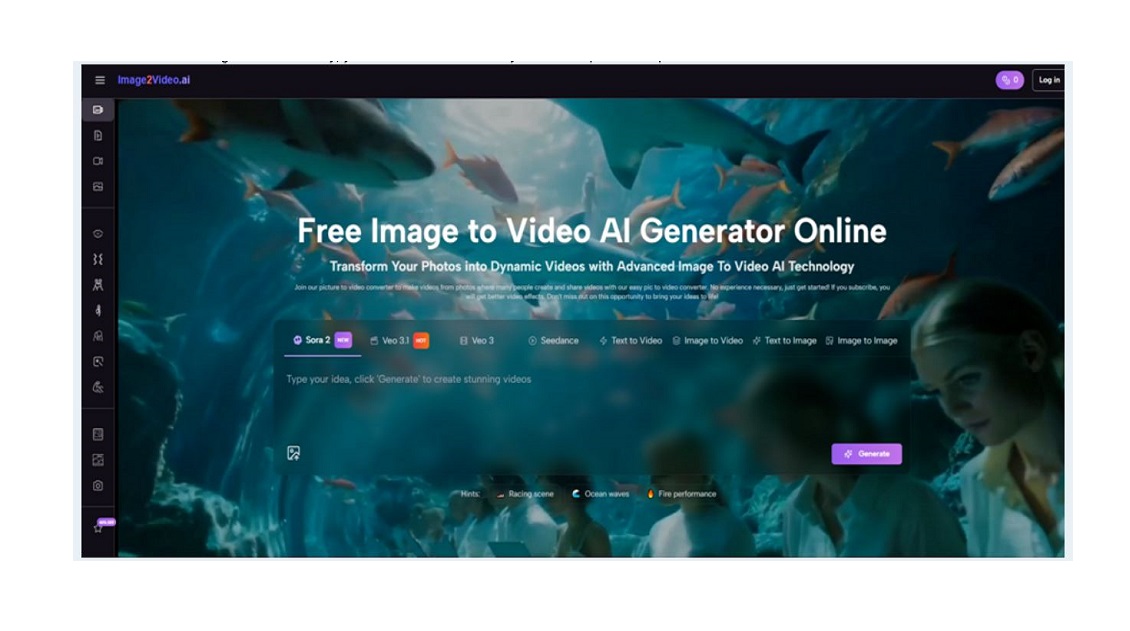

There is a quiet frustration in visual creation that rarely gets discussed. You can imagine a moving scene clearly in your head, but translating it into a finished video often requires tools that feel disconnected from that original idea. What I noticed when trying Image to Video AI is that the process removes that translation step almost entirely.

Instead of constructing motion manually, you describe it, and the system attempts to interpret that description.

Why Editing Is No Longer The Default Starting Point

The assumption that video must begin in an editor is slowly changing.

Creation Is Becoming Descriptive

Rather than building:

- timelines

- layers

- transitions

you describe:

- motion

- atmosphere

- intent

This changes both how quickly a first draft appears and who is able to make one.

The Cost Of Precision Tools

Traditional tools offer precision, but:

- require time investment

- require learning

- slow down iteration

For early ideas, that cost can be unnecessary.

How Motion Emerges From A Single Frame

The process is not animation in the traditional sense.

From Static Composition To Dynamic Interpretation

The Image Defines Boundaries

The uploaded image determines:

- subject position

- perspective

- visual balance

The system does not rebuild the scene; it evolves the scene that is already in the image.

Prompt As Behavioral Guide

The prompt influences:

- how elements move

- how the camera behaves

- how lighting feels

It acts more like direction than instruction.
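One way to keep a prompt clear is to write those three influences separately and then join them. The sketch below is only an illustration of that habit: `MotionPrompt` and its field names are hypothetical and are not part of any tool's interface.

```python
from dataclasses import dataclass


@dataclass
class MotionPrompt:
    """Hypothetical container for the three things a prompt influences."""

    subject_motion: str  # how elements move
    camera: str          # how the camera behaves
    lighting: str        # how lighting feels

    def to_text(self) -> str:
        # Join the non-empty parts into a single comma-separated prompt.
        parts = [self.subject_motion, self.camera, self.lighting]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = MotionPrompt(
    subject_motion="leaves drift slowly across the path",
    camera="slow push-in toward the subject",
    lighting="warm late-afternoon light",
)
print(prompt.to_text())
```

Keeping the parts separate makes it easier to change one direction, such as the camera move, while leaving the rest of the description untouched between attempts.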

Model Choice Shapes Output Style

Different models appear to influence:

- realism vs stylization

- motion fluidity

- consistency

The difference is noticeable even though no technical controls are exposed.

Actual Workflow Without Hidden Complexity

The process follows a straightforward structure.

Three Core Steps In Practice

Step 1 Provide Source Image

Upload a photo to define the base visual context.

Step 2 Describe Desired Motion

Add a prompt that explains movement, tone, and style.

Step 3 Generate Final Video

Wait for processing, then review and download the output.

There is no intermediate editing layer.
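The three steps can be pictured as a single request with three inputs. The sketch below is a minimal model of that idea under stated assumptions: `GenerationRequest`, `build_request`, the accepted file extensions, and the `"default"` model name are all hypothetical, and nothing here calls a real service.

```python
from dataclasses import dataclass
from pathlib import Path

# Assumption: common still-image formats; the real tool may accept others.
ALLOWED_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


@dataclass
class GenerationRequest:
    """Hypothetical bundle of everything the workflow asks the user for."""

    image_path: str
    prompt: str
    model: str = "default"  # hypothetical model name


def build_request(image_path: str, prompt: str, model: str = "default") -> GenerationRequest:
    # Step 1: the source image defines the base visual context.
    if Path(image_path).suffix.lower() not in ALLOWED_SUFFIXES:
        raise ValueError(f"unsupported image type: {image_path}")
    # Step 2: the prompt describes movement, tone, and style.
    cleaned = prompt.strip()
    if not cleaned:
        raise ValueError("prompt must not be empty")
    # Step 3 (generation) would submit this request; that part is omitted here.
    return GenerationRequest(image_path=image_path, prompt=cleaned, model=model)


request = build_request("portrait.jpg", "hair moves gently in the wind, slow zoom in")
print(request)
```

The request carries an image, a prompt, and a model choice, with no timeline or layer data, which reflects the absence of an intermediate editing layer.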

Comparing Two Creative Approaches

| Dimension | AI Generation Flow | Manual Editing Flow |
| --- | --- | --- |
| Speed | High | Low |
| Control Detail | Medium | High |
| Skill Requirement | Low | High |
| Iteration Ease | High | Low |
| Output Consistency | Variable | Stable |

Each approach has a place depending on the goal.

Where This Method Feels Most Effective

Rapid Concept Testing

When exploring multiple directions, speed matters more than precision.

Short Form Content Creation

For social media, emotional impact often outweighs technical perfection.

Visual Story Prototyping

Early-stage storytelling benefits from quick visual drafts.

Observed Constraints And Tradeoffs

Dependence On Prompt Clarity

Ambiguous descriptions often produce less satisfying results.

Occasional Motion Artifacts

In some cases, movement can feel slightly unnatural.

Need For Multiple Attempts

Refinement often comes through repetition.

These are expected behaviors in generative systems.

How Photo To Video Reflects A Larger Shift

The concept of Photo to Video is not just a feature. It represents a broader shift in how content is created.

Instead of building motion step by step, the system infers motion from intent.

This reduces friction but also introduces variability.

A New Kind Of Creative Workflow

From Execution To Direction

The creator becomes:

- a director of intent

- a designer of prompts

rather than an editor of frames.

From Precision To Exploration

The focus shifts toward:

- trying ideas quickly

- iterating freely

- refining direction

Why This Matters Now

The barrier to motion content is lowering.

That means:

- more creators entering the space

- faster cycles of experimentation

- new formats emerging

The tools themselves are not the most important part. The shift in how ideas are expressed is.

And that shift is already happening.