The search landscape is transforming faster than most people realize. ChatGPT, Google’s AI Overviews, Perplexity, and other AI-powered search tools are changing how people find information online. Yet amid all this disruption, one truth remains constant: technical SEO still matters, and its significance continues to grow.

Many website owners assume that AI search represents such a radical departure from traditional search that old rules no longer apply. They’re wrong. The technical foundation that made websites discoverable to Google’s algorithms is the same foundation that makes them accessible to AI systems. Without it, even brilliant content becomes invisible.

Understanding the New Search Reality

Traditional search engines showed users a list of links. AI search tools aim to provide direct answers, synthesizing information from multiple sources into conversational responses. This shift feels substantial from a user perspective, but the underlying mechanics of how these systems access and evaluate web content haven’t changed as much as people think.

AI search tools still need to crawl websites, understand their structure, parse their content, and determine relevance. They still respect (or should respect) robots.txt files, sitemap protocols, and canonical tags. The difference is what happens after they gather information. They summarize and synthesize rather than simply ranking and displaying.
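As a rough illustration of how a crawler decides whether it may fetch a page, the sketch below uses Python's standard `urllib.robotparser` module. The robots.txt rules and the `example.com` URLs are made up for this example; `GPTBot` is the user-agent token OpenAI publishes for its crawler, and `SomeOtherBot` is a placeholder name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    checks = [
        ("GPTBot", "https://example.com/private/report"),
        ("GPTBot", "https://example.com/blog/post"),
        ("SomeOtherBot", "https://example.com/admin/"),
    ]
    for agent, url in checks:
        print(agent, url, is_allowed(agent, url))
```

Note that a crawler with its own `User-agent` group follows only that group, so the wildcard rules do not apply to it. Whether a given AI crawler actually honors these rules is up to its operator.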

This means technical SEO isn’t becoming obsolete. It’s becoming more critical because AI systems need clean, well-structured data to work with. The rule of garbage in, garbage out holds for AI just as firmly as it did for traditional algorithms.

Site Speed and Performance

AI crawlers operate under constraints similar to those of traditional search bots. They have limited time and resources to spend on each website. Slow-loading pages frustrate users and consume more crawler resources, which makes those pages less likely to be fully indexed and understood.

Google’s Core Web Vitals, which measure page experience, remain important for AI search just as they are for traditional search. Key metrics to consider include:

- Largest Contentful Paint: It shows how fast the main content becomes visible to users

- Interaction to Next Paint: It indicates how quickly a page responds when a user interacts with it (this metric replaced First Input Delay as a Core Web Vital in March 2024)

- Cumulative Layout Shift: It tracks unexpected layout changes that can disrupt the user experience

These elements work together to shape how efficiently users can access and use your content.
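To make the metrics concrete, here is a minimal sketch that rates a measured value against the thresholds Google publishes for Largest Contentful Paint (LCP), Interaction to Next Paint (INP, the responsiveness metric that replaced First Input Delay in 2024), and Cumulative Layout Shift (CLS). The function name and the sample values are illustrative assumptions, not part of any official tool.

```python
# (good_max, needs_improvement_max) per metric, following Google's
# published Core Web Vitals thresholds.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_metric(name: str, value: float) -> str:
    """Classify a measurement as 'good', 'needs improvement', or 'poor'."""
    good_max, needs_improvement_max = THRESHOLDS[name]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    # Hypothetical measurements for a single page.
    for metric, value in [("LCP", 8.0), ("INP", 150), ("CLS", 0.2)]:
        print(metric, value, rate_metric(metric, value))
```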

A website that takes eight seconds to load annoys visitors and also creates barriers for AI systems trying to extract information quickly across thousands of pages. Optimizing images, minimizing code bloat, leveraging browser caching, and using content delivery networks are no longer optional nice-to-haves. They’re requirements for being properly understood by AI search systems.

Structured Data

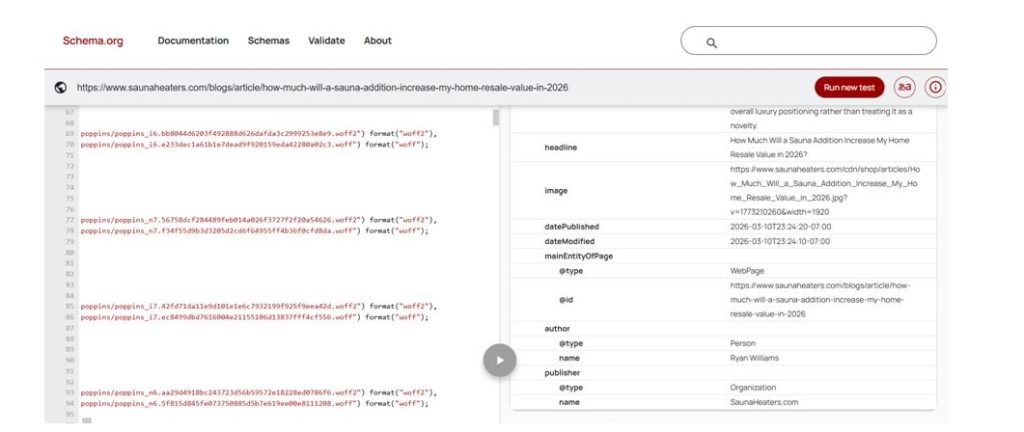

If technical SEO had a secret weapon for the AI era, structured data would be it. Schema markup provides explicit information about content in a format that machines can easily parse and understand. While human readers can infer that a page is about a recipe, AI systems benefit enormously from structured data that explicitly labels ingredients, cooking times, and nutrition information.

The irony is that structured data has been available for years, yet adoption remains surprisingly low. Only about 30% of websites implement schema markup properly. Those that do gain significant advantages in how AI systems interpret and utilize their content:

Source: SaunaHeaters.com

- Product schema helps ecommerce sites communicate pricing, availability, and specifications

- Article schema aids publishers by marking headlines, authors, and publication dates

- Local business schema supports brick-and-mortar establishments with hours and locations

- Review schema adds credibility markers through ratings and testimonials

- Event schema provides temporal context for time-sensitive content

The websites that invested in comprehensive structured data years ago aren’t scrambling to adapt to AI search. They’re already speaking the language these systems prefer.
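For readers who have not seen schema markup before, the sketch below builds a small Product schema as JSON-LD and wraps it in the script tag a page would carry. The vocabulary (`Product`, `Offer`, `price`, `priceCurrency`, `availability`) comes from schema.org; the function names and the sample product are hypothetical.

```python
import json


def product_schema(name: str, price: float, currency: str, in_stock: bool) -> dict:
    """Build a minimal schema.org Product description as a plain dict."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


def to_script_tag(schema: dict) -> str:
    """Serialize the schema as a JSON-LD script tag for the page's HTML."""
    body = json.dumps(schema, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Hypothetical product, for illustration only.
    print(to_script_tag(product_schema("Example Sauna Heater", 499.0, "USD", True)))
```

A real product page would usually carry more properties (images, SKU, reviews), and the output is worth checking with a structured data validator before shipping.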

Clean Site Architecture Guides AI Understanding

Website structure influences how AI systems comprehend the relationships between different pieces of content. A logical hierarchy with clear parent-child relationships helps these systems build accurate mental models of what a website covers and how different topics relate to each other.

Flat site architectures, where everything sits at the same level, create confusion. Deep architectures, where important content sits several clicks away from the homepage, often get overlooked. The ideal structure resembles a pyramid: broad categories at the top, specific content below, with clear pathways connecting related information.
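One way to check how deep content sits is to compute each page's click depth from the homepage. The sketch below does this with a breadth-first search over an internal link graph. The `links` dictionary and its paths are a made-up example, and in practice the graph would come from a crawl of the site.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Return the minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


if __name__ == "__main__":
    # Hypothetical internal link graph: page -> pages it links to.
    links = {
        "/": ["/running/", "/about/"],
        "/running/": ["/running/beginner-tips/"],
        "/running/beginner-tips/": ["/running/shoes/", "/running/shin-splints/"],
    }
    for page, depth in sorted(click_depths(links).items(), key=lambda item: item[1]):
        print(depth, page)
```

Pages missing from the result are unreachable through internal links, which is usually a bigger problem than being deep.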

Internal linking strategies matter tremendously in this context. They distribute link equity and also create semantic connections that help AI systems understand topic relationships. A blog post about “beginner running tips” that links to articles about “choosing running shoes” and “preventing shin splints” signals to AI that these topics connect logically.

Breadcrumb navigation, clear URL structures, and consistent categorization all contribute to making site architecture comprehensible. These elements have always been SEO best practices, but their importance grows when AI systems need to quickly map and understand an entire website’s knowledge graph.

Mobile Optimization Remains Non-Negotiable

The shift toward mobile-first indexing wasn’t just about accommodating smartphone users. It reflected the reality that most web browsing happens on mobile devices. AI search tools follow the same pattern. They primarily access mobile versions of websites.

Responsive design, mobile-friendly navigation, and touch-optimized interfaces matter for AI crawlers just as they do for mobile users. A desktop-only website or one with separate mobile and desktop versions creates fragmentation that AI systems struggle to reconcile.

Mobile optimization also intersects with performance. Mobile networks often have less bandwidth than broadband connections, making page speed even more critical. AI systems accessing sites over mobile connections face the same constraints, potentially limiting how much content they can efficiently process.

HTTPS and Security Build Trust Signals

Security protocols communicate trustworthiness to both users and automated systems. HTTPS encryption has become table stakes for any serious website, but its implications extend beyond protecting user data.

AI systems need to evaluate source credibility when synthesizing information. Security signals contribute to those credibility assessments. A website still running on HTTP in 2026 raises red flags about maintenance, professionalism, and reliability — factors that influence whether AI systems choose to cite or reference that content.

Certificate errors, mixed content warnings, and security vulnerabilities don’t just deter visitors. They create trust deficits that impact how AI search tools evaluate and utilize content.
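Mixed content is straightforward to detect in principle: look for subresources that an HTTPS page loads over plain HTTP. The sketch below does this with Python's standard `html.parser`. It covers only a few tag types and is an assumption-laden simplification, not a replacement for a browser's own mixed content checks.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Collect subresource URLs that are loaded over plain http://."""

    def __init__(self) -> None:
        super().__init__()
        self.insecure: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in ("img", "script", "iframe"):
            url = attr_map.get("src")
        elif tag == "link" and "stylesheet" in (attr_map.get("rel") or "").lower():
            url = attr_map.get("href")
        else:
            url = None  # ordinary <a> links are navigation, not mixed content
        if url and url.lower().startswith("http://"):
            self.insecure.append(url)


def find_mixed_content(html: str) -> list[str]:
    """Return insecure subresource URLs found in an HTML document."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


if __name__ == "__main__":
    # Hypothetical page fragment, for illustration only.
    sample = '<img src="http://example.com/a.png"><script src="https://example.com/app.js"></script>'
    print(find_mixed_content(sample))
```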

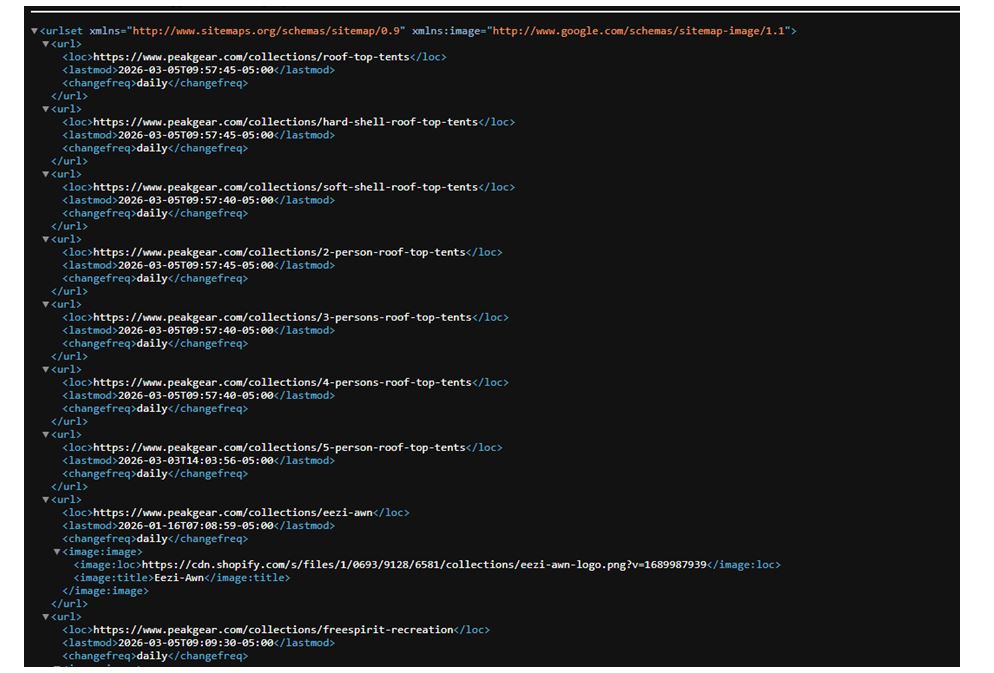

XML Sitemaps Guide Efficient Crawling

Source: PeakGear

Sitemaps provide AI crawlers with roadmaps to website content. They indicate which pages exist, when they were last updated, and their relative importance. This efficiency matters when systems need to process millions of websites regularly.

Proper sitemap implementation includes excluding duplicate content, marking priority pages, and updating modification dates accurately. These details help AI systems allocate crawl resources effectively and understand content freshness.

Dynamic sitemaps that automatically update when content changes ensure AI systems discover new information quickly. Static sitemaps that haven’t been updated in months leave systems working with outdated maps of website content.
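A dynamic sitemap can be as simple as a function that regenerates the XML from the site's current list of pages. The sketch below builds one with Python's standard `xml.etree.ElementTree`, using the `urlset`, `url`, `loc`, and `lastmod` elements from the sitemaps.org protocol. The `build_sitemap` function and the sample pages are hypothetical, and a real site would pull URLs and dates from its content database.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Build sitemap XML from (url, last_modified) pairs, skipping duplicate URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    seen = set()
    for loc, last_modified in pages:
        if loc in seen:  # keep each URL only once
            continue
        seen.add(loc)
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages, for illustration only.
    pages = [
        ("https://example.com/", date(2026, 1, 15)),
        ("https://example.com/blog/technical-seo", date(2026, 1, 10)),
    ]
    print(build_sitemap(pages))
```

Calling this whenever content is published or edited keeps `lastmod` accurate.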

Canonical Tags Prevent Confusion

Duplicate content has always complicated search engine optimization. For AI systems trying to extract and synthesize information, duplicates create even bigger problems. Which version should they reference? How do they reconcile minor differences between similar pages?

Canonical tags solve this by explicitly declaring the preferred version of content. They tell AI systems, “This is the authoritative source for this information — ignore the variants.” Without proper canonicalization, AI tools might extract information from inferior versions or become confused by contradictory signals from near-duplicate pages.

Ecommerce sites with product pages accessible through multiple URLs, blogs that syndicate content, and websites with printer-friendly versions all need robust canonical tag strategies.
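As a sketch of what a canonical tag strategy can look like in code, the example below collapses URL variants (tracking parameters, fragments, host casing) into one preferred URL and emits the matching `<link rel="canonical">` tag. The `IGNORED_PARAMS` set is an assumption for illustration, since which parameters are safe to drop depends entirely on the site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that do not change what the page shows.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}


def canonical_url(url: str) -> str:
    """Drop ignored query parameters and the fragment; lowercase the host."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def canonical_tag(url: str) -> str:
    """Return the <link rel="canonical"> tag for the page at url."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


if __name__ == "__main__":
    # Hypothetical product URL reached through a newsletter link.
    print(canonical_tag("https://Shop.example.com/heaters/model-x?utm_source=news&color=black#reviews"))
```

Parameters that do change the content, such as `color` in the example, are kept, so each genuinely distinct page still has its own canonical URL.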

The Compounding Advantage

Technical SEO improvements compound over time. A faster website becomes easier to crawl more frequently. Better structured data leads to a richer understanding. Cleaner architecture enables more comprehensive indexing. These advantages stack and reinforce each other.

Websites that neglect technical SEO face compounding disadvantages. Slow speeds limit crawl depth. Poor structure creates comprehension gaps. Missing structured data forces AI systems to guess at meaning. These issues multiply and magnify over time.

The gap between technically optimized websites and neglected ones will likely widen as AI search becomes more sophisticated. Early investment in technical foundations pays dividends that extend far into the future.

Moving Forward in the AI Era

The transition to AI-powered search doesn’t require abandoning everything learned about SEO over the past two decades. It requires doubling down on technical fundamentals while recognizing that these foundations now serve dual purposes: they make content accessible to both traditional search engines and emerging AI systems.

Smart website owners aren’t choosing between optimizing for Google or optimizing for ChatGPT. They’re building technically sound websites that perform well across all search contexts. The same clean code, logical structure, and clear signals that helped traditional SEO now power AI search success.

Technical SEO was never really about gaming algorithms or finding shortcuts. It was always about creating websites that clearly communicate their content and purpose to automated systems. That mission hasn’t changed — it’s just become more important as those automated systems grow more powerful and prevalent.

Author Bio

Andy Beohar is the Managing Partner at SevenAtoms, a premier San Francisco-based ecommerce SEO agency. SevenAtoms excels at helping SaaS, Tech, and Ecommerce businesses achieve exceptional growth through paid search and paid social campaigns. Andy strategizes and executes high-impact paid search marketing strategies that drive measurable results. Connect with Andy on LinkedIn or Twitter!