When people talk about AI music tools, the conversation often stays at the solo creator level. But in practice, the more interesting question is how these tools fit into a team workflow: marketers, editors, social producers, motion designers, and founders who all need “good enough to test” audio before a campaign locks. That is where ToMusic becomes easier to evaluate.

In a team environment, an AI Music Generator is judged less by whether it can make a beautiful song in isolation than by whether it reduces production delays, helps teams compare directions faster, and keeps audio decisions moving without waiting for a fully manual process.

Why Teams Struggle With Music Decisions First

Most teams do not fail because they lack taste. They fail because music selection becomes a late-stage bottleneck:

- edit is ready but soundtrack is not

- approved script exists but tone is still unclear

- multiple stakeholders describe mood differently

- revision rounds happen after publishing deadlines are already tight

Traditional solutions can be excellent, but they are often slower than the pace of short-form content production. AI generation changes this by making early audio drafts easier to produce and compare.

The Real Value Is Shared Direction, Not Just Speed

Speed matters, but shared direction matters more. A generated draft gives teams something concrete to react to. “More uplifting” is abstract. A track example is specific. Even when a team does not keep the first output, it usually reaches alignment faster after hearing it.

Why ToMusic Fits Early-Stage Team Reviews

The platform’s prompt-based and lyric-based inputs are useful because different teammates think in different formats. A marketer may describe mood and audience. A writer may provide lyrics. A video editor may request tempo and energy shape. The system can absorb those inputs into a comparable output format: audio.

Concrete Audio Ends Vague Meetings Faster

In my testing, the fastest meetings are the ones where people react to examples, not adjectives. AI-generated drafts are often strongest as alignment tools before they become final assets.

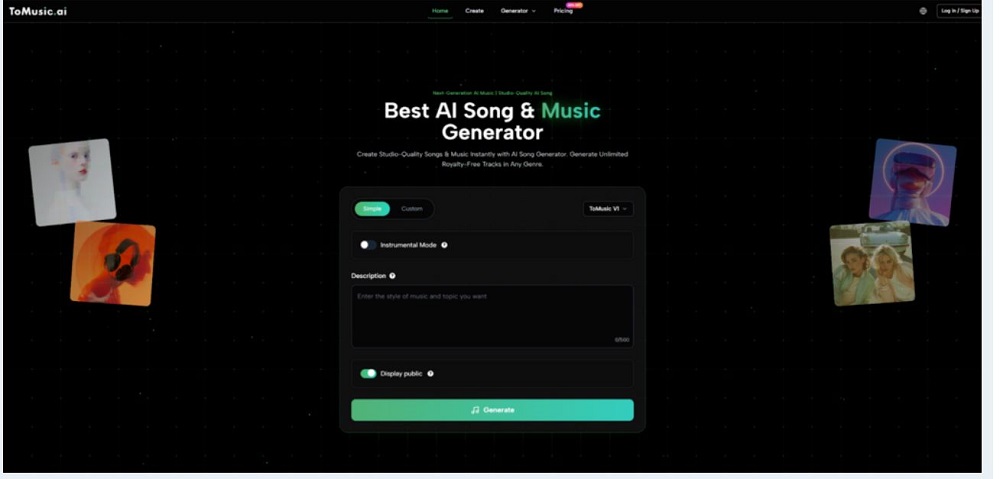

A Team-Friendly Creation Framework Using Official Flow

The platform can be used in a simple three-step loop that maps well to production teams.

Three Steps For Collaborative Music Drafting

| Step | Team Action | Output For Review |
| --- | --- | --- |
| 1 | Input prompt or lyrics with style direction | First audio draft options |
| 2 | Select mode and model version | Different interpretations of same brief |
| 3 | Regenerate with revised cues | Narrowed candidates for final edit |

This mirrors the product’s visible logic and keeps the process lightweight enough for fast-moving teams.

How To Split Responsibilities Without Confusion

A practical division of labor looks like this:

- strategist defines goal and audience mood

- editor defines pacing needs

- writer adds lyric concepts when needed

- one owner runs generation and logs what changed

This matters because prompt quality improves when responsibilities are clear. Many bad outputs come from mixed briefs, not weak models.
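As a minimal sketch of what "logs what changed" could look like, the owner might keep one record per generation and compare consecutive records. Everything here is an assumption for illustration: the `GenerationRun` fields, the `model-a` label, and the names are hypothetical and do not come from ToMusic.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class GenerationRun:
    """One logged generation attempt. All field names are hypothetical."""
    run_id: int
    prompt: str
    mode: str        # e.g. "simple" or "custom"
    model: str       # placeholder label, not a real model name
    owner: str
    note: str = ""   # free-text reason for the change


def changed_fields(previous: GenerationRun, current: GenerationRun) -> dict:
    """Return {field: (old, new)} for every brief field that differs."""
    ignored = {"run_id", "note"}  # bookkeeping fields, not part of the brief
    old, new = asdict(previous), asdict(current)
    return {
        key: (old[key], new[key])
        for key in old
        if key not in ignored and old[key] != new[key]
    }


first = GenerationRun(1, "warm acoustic, hopeful, 30s", "simple", "model-a", "dana")
second = GenerationRun(
    2, "warm acoustic, hopeful, 30s, faster tempo", "simple", "model-a", "dana",
    note="editor asked for more energy",
)

print(changed_fields(first, second))
```

When only one field changes between runs, the team can attribute a better or worse result to that change instead of guessing.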

Where Simple And Custom Paths Help Different Roles

ToMusic’s simple and custom creation paths are useful in teams because they match different review stages.

Use Simple Mode During Concept Review Meetings

Simple mode is a strong fit for early ideation:

- testing mood directions

- deciding if vocals are necessary

- finding broad genre fit

- creating placeholder tracks for rough cuts

At this stage, precision is less important than range.

Use Custom Mode When Messaging Must Stay Intact

Custom mode becomes more important when lyrics carry brand language, campaign themes, or narrative structure. The platform supports custom lyrics and structured tags like verse and chorus markers, which helps teams preserve message flow while still experimenting with musical style.

In this later stage, Lyrics to Song AI is more useful as a controlled drafting system than as a novelty feature, because it lets teams compare musical interpretations while the wording stays fixed.

Why Structured Lyrics Reduce Revision Noise

Without structure, teams may misdiagnose problems. They may think the wording is weak when the issue is musical phrasing, or blame the arrangement when the lyric sequence is unclear. Structure tags help separate those variables.
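One way to make that separation concrete is to compare two lyric drafts section by section before listening. If no section changed, any difference the team hears is musical rather than verbal. The sketch below assumes tags are written on their own line as `[Verse]` or `[Chorus]`; that syntax and the helper names are assumptions for illustration, not a documented ToMusic format.

```python
import re
from itertools import zip_longest

# Assumed tag syntax: a line such as "[Verse]" or "[Chorus]" opens a section.
TAG_LINE = re.compile(r"^\[(?P<tag>[^\]]+)\]\s*$")


def split_sections(lyrics: str) -> list[tuple[str, list[str]]]:
    """Split tagged lyrics into ordered (tag, lines) pairs."""
    sections: list[tuple[str, list[str]]] = []
    for raw in lyrics.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = TAG_LINE.match(line)
        if match:
            sections.append((match.group("tag"), []))
        elif sections:
            sections[-1][1].append(line)
        else:
            # Lines before the first tag are collected as an untagged section.
            sections.append(("Untagged", [line]))
    return sections


def changed_sections(old: str, new: str) -> list[str]:
    """Return the tags of sections whose tag or wording differs by position."""
    changed = []
    for before, after in zip_longest(split_sections(old), split_sections(new)):
        if before != after:
            changed.append((after or before)[0])
    return changed


draft_v1 = """[Verse]
Morning light on the city
We build it one step at a time
[Chorus]
Start small, go far
"""

draft_v2 = """[Verse]
Morning light on the city
We build it one step at a time
[Chorus]
Start small, dream far
"""

print(changed_sections(draft_v1, draft_v2))  # ['Chorus']
```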

A Comparison Table That Helps Teams Choose Usage Style

| Team Need | Better Starting Approach | Why It Helps |
| --- | --- | --- |
| Fast concept testing | Text prompt in simple path | Quick range without over-specifying |
| Brand message retention | Custom lyrics in custom path | Keeps wording central to output |
| Mood alignment across stakeholders | Same prompt across multiple models | Easier side-by-side discussion |
| Batch content production | Reuse saved tracks and parameters | Reduces repeated setup effort |

This kind of comparison is more useful than marketing-style “feature lists” because teams care about workflow outcomes.

Model Comparison Can Replace Endless Prompt Rewrites

The platform offers multiple models, and teams can use that as a practical review method. Instead of rewriting the whole brief after one imperfect result, try the same brief across different models first. In many cases, the core direction is right, but the interpretation style is not.
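The "models first, rewrite second" habit can be written down as a simple review rule. In this sketch, reviewers score each model's draft of the same brief from 1 to 5, and the brief is only rewritten if no model clears a threshold. The model labels, the scoring scale, and the 3.5 threshold are all illustrative assumptions, not part of the platform.

```python
from statistics import mean


def next_step(scores: dict[str, list[int]], keep_threshold: float = 3.5) -> str:
    """Decide whether to keep the brief or rewrite it.

    `scores` maps a model label to reviewer scores (1-5) for drafts
    generated from the same brief.
    """
    averages = {model: mean(values) for model, values in scores.items()}
    best_model = max(averages, key=averages.get)
    if averages[best_model] >= keep_threshold:
        # The direction is right; only the interpretation needed to change.
        return f"keep brief, continue with {best_model}"
    # No model produced an acceptable reading, so the brief itself is suspect.
    return "rewrite brief"


review = {
    "model-a": [2, 3, 2],
    "model-b": [4, 4, 5],
    "model-c": [3, 3, 4],
}

print(next_step(review))  # keep brief, continue with model-b
```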

Operational Details That Matter For Repeat Production

ToMusic also highlights length control, customization, licensing options, and saved music management. These features are easy to underestimate until teams start producing weekly or daily content.

Library Organization Supports Production Memory

A library that stores tracks with metadata, lyrics, and generation parameters turns each experiment into a searchable asset. For teams, this becomes internal memory:

- what worked for product launches

- what failed for tutorials

- what style matched paid ads

- what pacing fit vertical video best
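A team that also keeps its own notes could model this memory as a small searchable list. The record below is a hypothetical sketch: the `SavedTrack` fields, the `verdict` values, and the sample entries are invented for illustration and do not describe how ToMusic stores its library.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SavedTrack:
    """A team-side note about one saved track. All fields are hypothetical."""
    title: str
    prompt: str
    model: str
    use_case: str   # e.g. "product launch", "tutorial", "paid ad"
    verdict: str    # "worked" or "failed", as judged by the team
    tags: set[str] = field(default_factory=set)


def find_tracks(
    library: list[SavedTrack],
    use_case: Optional[str] = None,
    verdict: Optional[str] = None,
    tag: Optional[str] = None,
) -> list[SavedTrack]:
    """Return tracks matching every filter that was provided."""
    return [
        track
        for track in library
        if (use_case is None or track.use_case == use_case)
        and (verdict is None or track.verdict == verdict)
        and (tag is None or tag in track.tags)
    ]


library = [
    SavedTrack("Launch A", "bright synth pop, confident", "model-a",
               "product launch", "worked", {"vertical", "upbeat"}),
    SavedTrack("Tutorial B", "dramatic orchestral build", "model-b",
               "tutorial", "failed", {"horizontal"}),
    SavedTrack("Ad C", "lo-fi groove, relaxed", "model-a",
               "paid ad", "worked", {"vertical"}),
]

for track in find_tracks(library, verdict="worked", tag="vertical"):
    print(track.title, "|", track.prompt)
```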

Licensing Mentions Are Useful But Need Verification

The platform mentions licensing and commercial use options, which is important for business teams. But it is still wise to verify the exact plan terms before publishing client campaigns or paid ads. Legal clearance should progress alongside the creative work rather than wait until the work is finished.

Treat Licensing As A Publishing Checkpoint

A simple rule helps: generate early, validate rights before final deployment. That keeps experimentation fast while reducing risk.
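The rule above can be expressed as a gate in whatever tracker the team already uses. This is a minimal sketch with invented names: the `Stage` values and the `rights_verified` flag are assumptions, and setting the flag still depends on a person reading the actual plan terms.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    INTERNAL_REVIEW = "internal_review"
    PUBLISH = "publish"


@dataclass
class Track:
    title: str
    rights_verified: bool = False  # set by whoever checked the plan terms


def can_advance(track: Track, target: Stage) -> bool:
    """Drafting and internal review are always allowed; publishing needs verified rights."""
    if target is Stage.PUBLISH:
        return track.rights_verified
    return True


rough_cut = Track("Spring campaign v3")
print(can_advance(rough_cut, Stage.INTERNAL_REVIEW))  # True
print(can_advance(rough_cut, Stage.PUBLISH))          # False

rough_cut.rights_verified = True
print(can_advance(rough_cut, Stage.PUBLISH))          # True
```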

What Teams Should Expect Realistically

AI-generated music can significantly improve speed and alignment, but it does not remove editorial judgment. You will still need:

- prompt refinement

- output comparison

- occasional re-generation

- human decisions on final fit

That is not a weakness. It is the normal cost of creative work. The advantage is that teams can spend more time judging options and less time waiting for the first options to exist.

Why This Matters More In 2026 Workflows

Content cycles are shorter, formats are multiplying, and audiences notice mismatched audio quickly. Tools that reduce the gap between brief and listenable draft can materially improve output quality, not because they automate taste, but because they give teams more opportunities to exercise taste before deadlines force compromise.

For many teams, that is the real promise: not replacing music production, but building a faster bridge between concept, review, and publish-ready direction.